The Convolution Operation

The First Step Towards Understanding Convolutional Neural Networks!

To comprehend Convolutional Neural Networks (CNNs), it's essential to understand, or at least have some knowledge of, how the convolution operation works.

Convolution itself is a core mathematical operation, integral to various domains including signal processing, image processing, and particularly deep learning.

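The true power of the convolution operation lies in its ability to characterize physical systems: the output of a linear time-invariant (LTI) system is the convolution of its input with the system's impulse response, so knowing that single response is enough to predict the output for any input.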
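To make the "characterizing physical systems" idea concrete, here is a minimal sketch assuming NumPy and a discrete linear time-invariant (LTI) system. The system `moving_average_system` (a hypothetical 3-point moving average) is an illustrative choice, not something from the original post. We measure its response to a unit impulse once, then predict its response to a different input purely by convolution.

```python
import numpy as np

def moving_average_system(x):
    """Hypothetical LTI system: y[n] = (x[n] + x[n-1] + x[n-2]) / 3, with x[n] = 0 for n < 0."""
    x = np.asarray(x, dtype=float)
    padded = np.concatenate([np.zeros(2), x])  # two leading zeros stand in for x[-1], x[-2]
    return (padded[2:] + padded[1:-1] + padded[:-2]) / 3

# Characterize the system once: feed it a unit impulse and record the impulse response.
impulse = np.zeros(5)
impulse[0] = 1.0
h = moving_average_system(impulse)  # [1/3, 1/3, 1/3, 0, 0]

# Predict the response to another input using only convolution with h.
x = np.array([3.0, 0.0, 6.0, 3.0, 9.0])
predicted = np.convolve(x, h)[:len(x)]  # keep the first len(x) samples
measured = moving_average_system(x)
print(np.allclose(predicted, measured))  # True
```

The prediction matches because the system is linear and time-invariant; for a nonlinear system this shortcut would not hold.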

Let's examine the mechanics of this operation!

The Convolution Integral: The Foundation

At its core, the convolution operation for continuous signals is defined by the convolution integral.

For two functions x(t) and h(t), their convolution is given by:

$$y(t) = x(t) * h(t) = \int_{-\infty}^{\infty} x(\lambda)\, h(t - \lambda)\, d\lambda$$

Here, λ is a dummy variable of integration and the asterisk (*) denotes the convolution operation. A key property of convolution is that it's commutative:

$$x(t) * h(t) = h(t) * x(t)$$

This means we can also write the integral as:

$$y(t) = \int_{-\infty}^{\infty} h(\lambda)\, x(t - \lambda)\, d\lambda$$

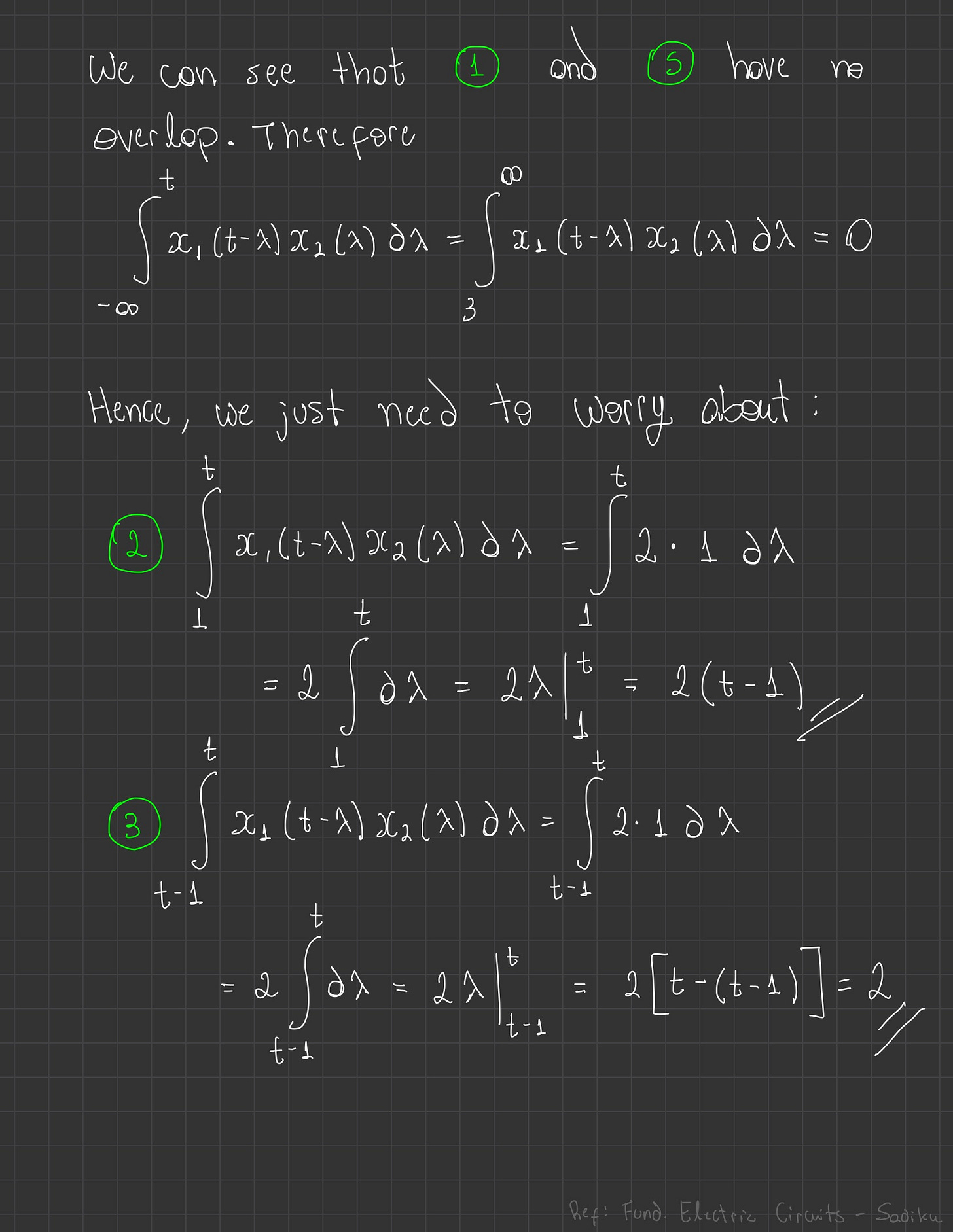

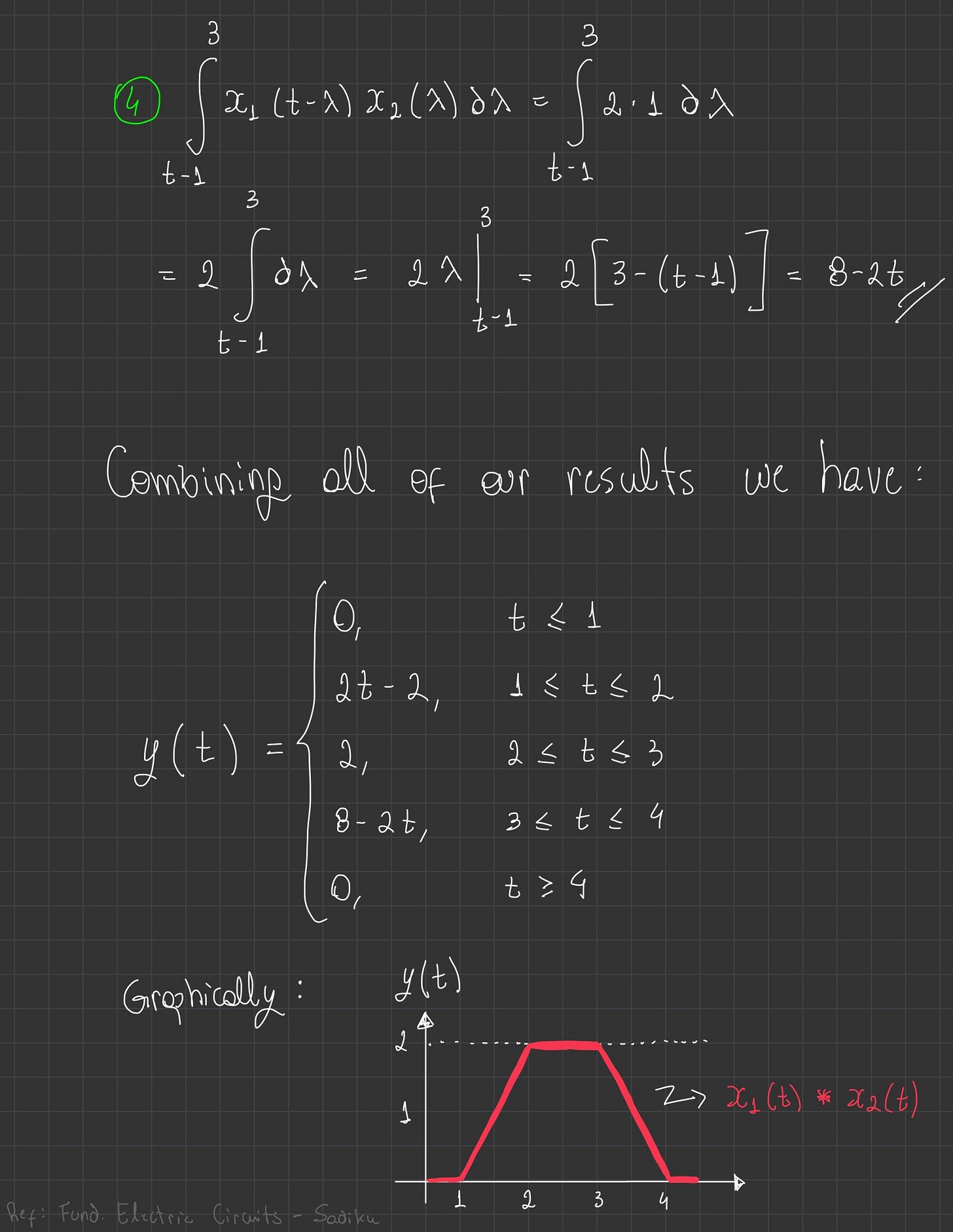
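Commutativity is easy to check numerically in the discrete setting. This short sketch assumes NumPy, whose `np.convolve` computes the full discrete convolution sum; the two signals are arbitrary illustrative values.

```python
import numpy as np

# Two arbitrary discrete signals (illustrative values only).
x = np.array([1.0, 2.0, 3.0])
h = np.array([0.5, -1.0, 2.0, 4.0])

# Convolve in both orders; commutativity says the results must be identical.
xh = np.convolve(x, h)
hx = np.convolve(h, x)
print(np.allclose(xh, hx))  # True
```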

Convolving two signals consists of time-reversing one of them, shifting it, multiplying it point by point with the second signal, and integrating the product.

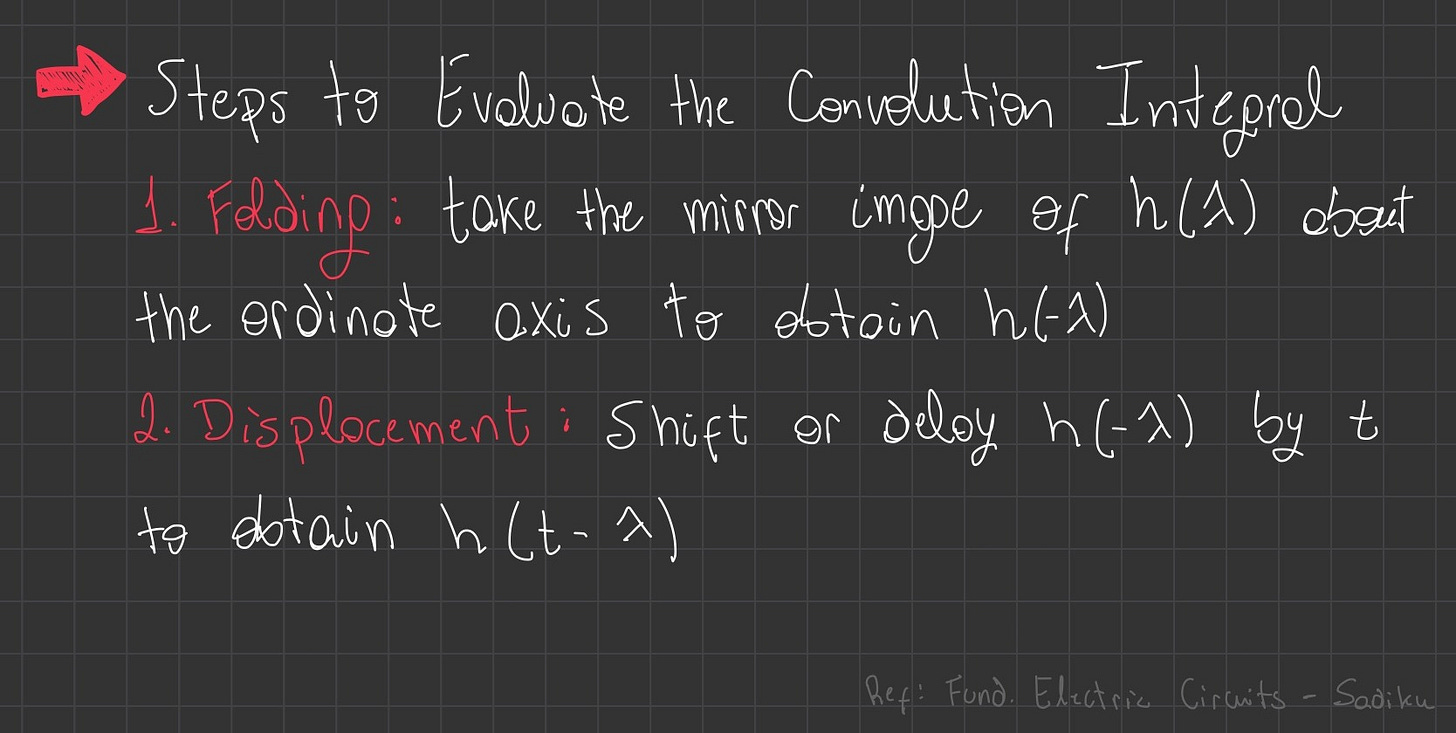

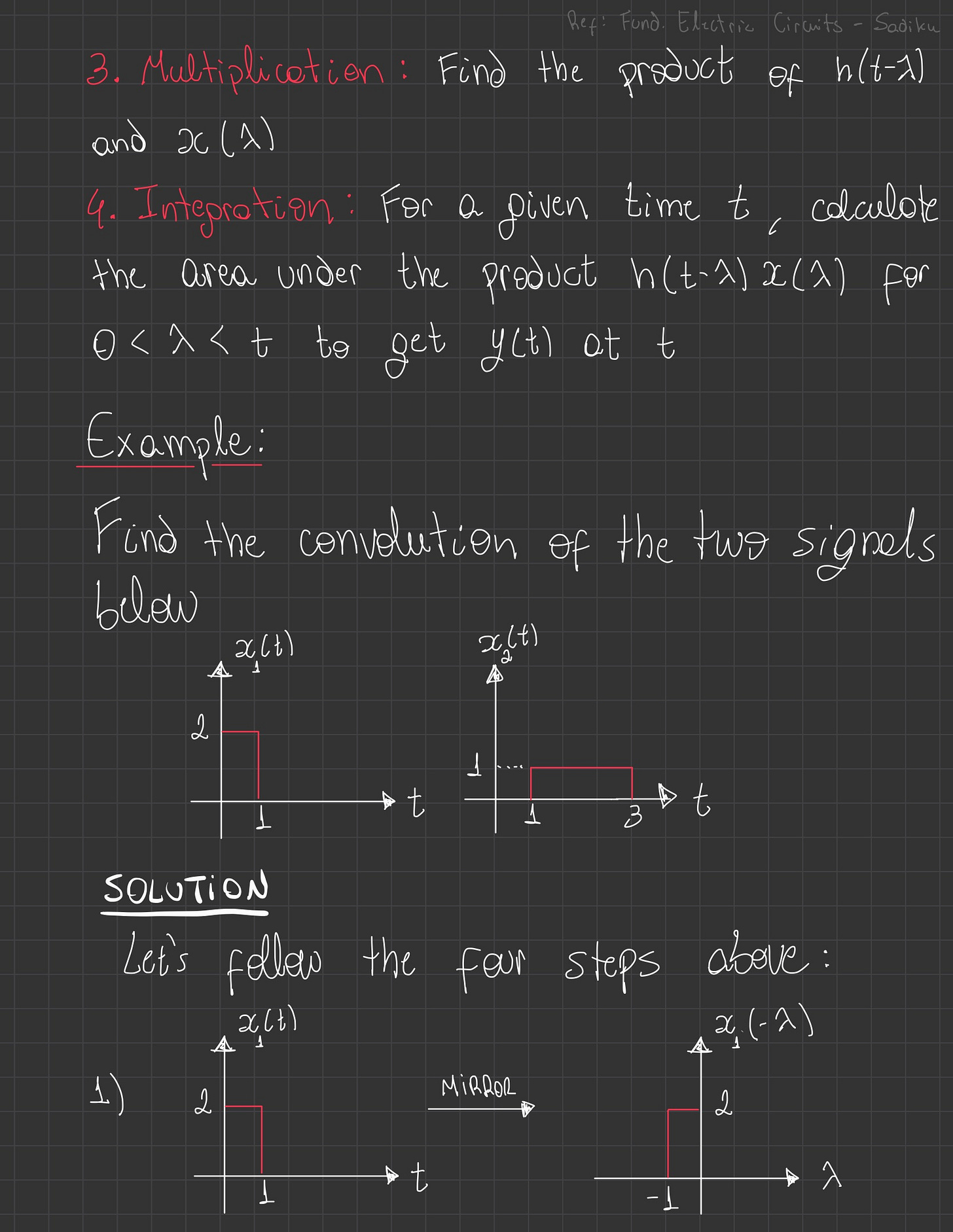

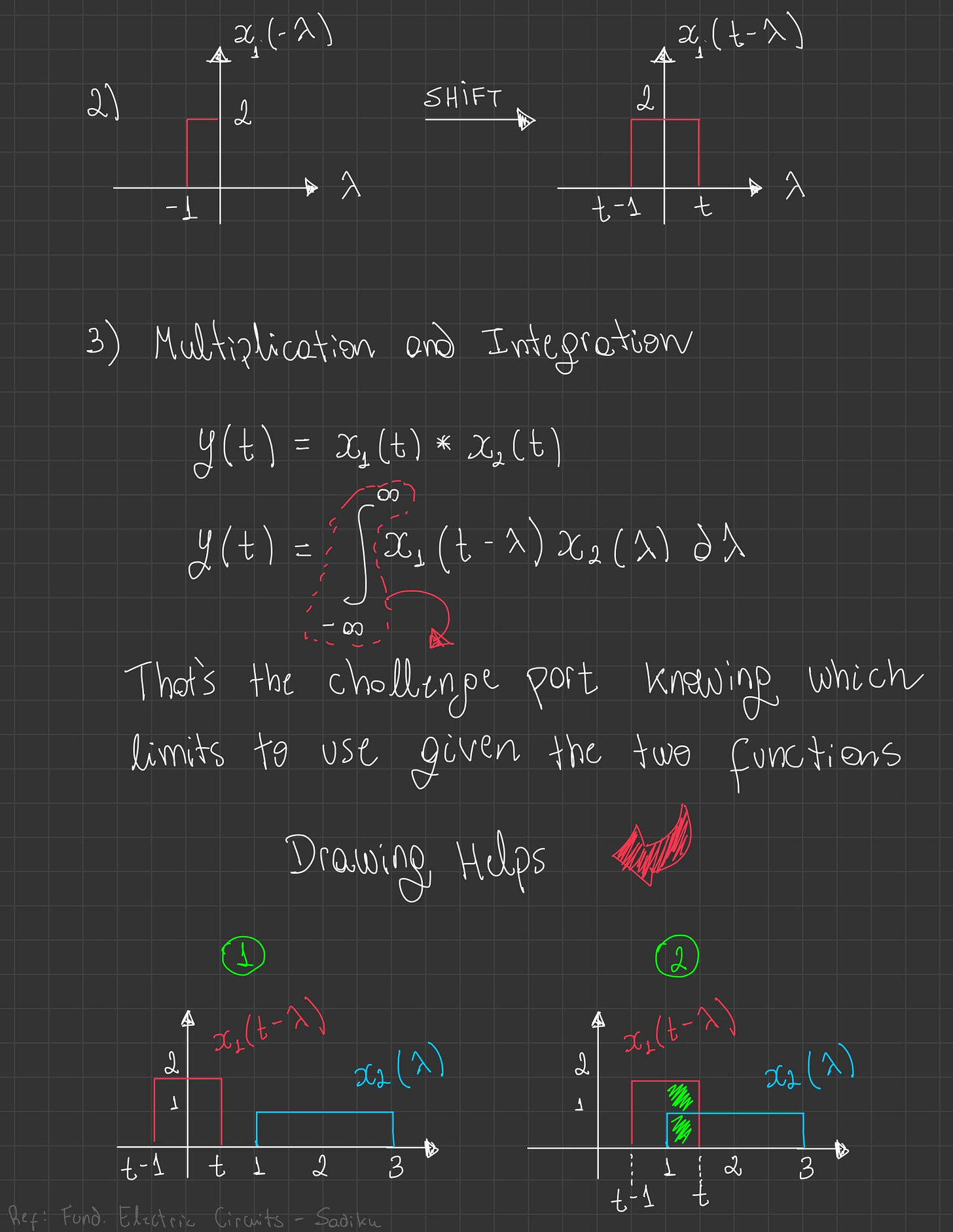

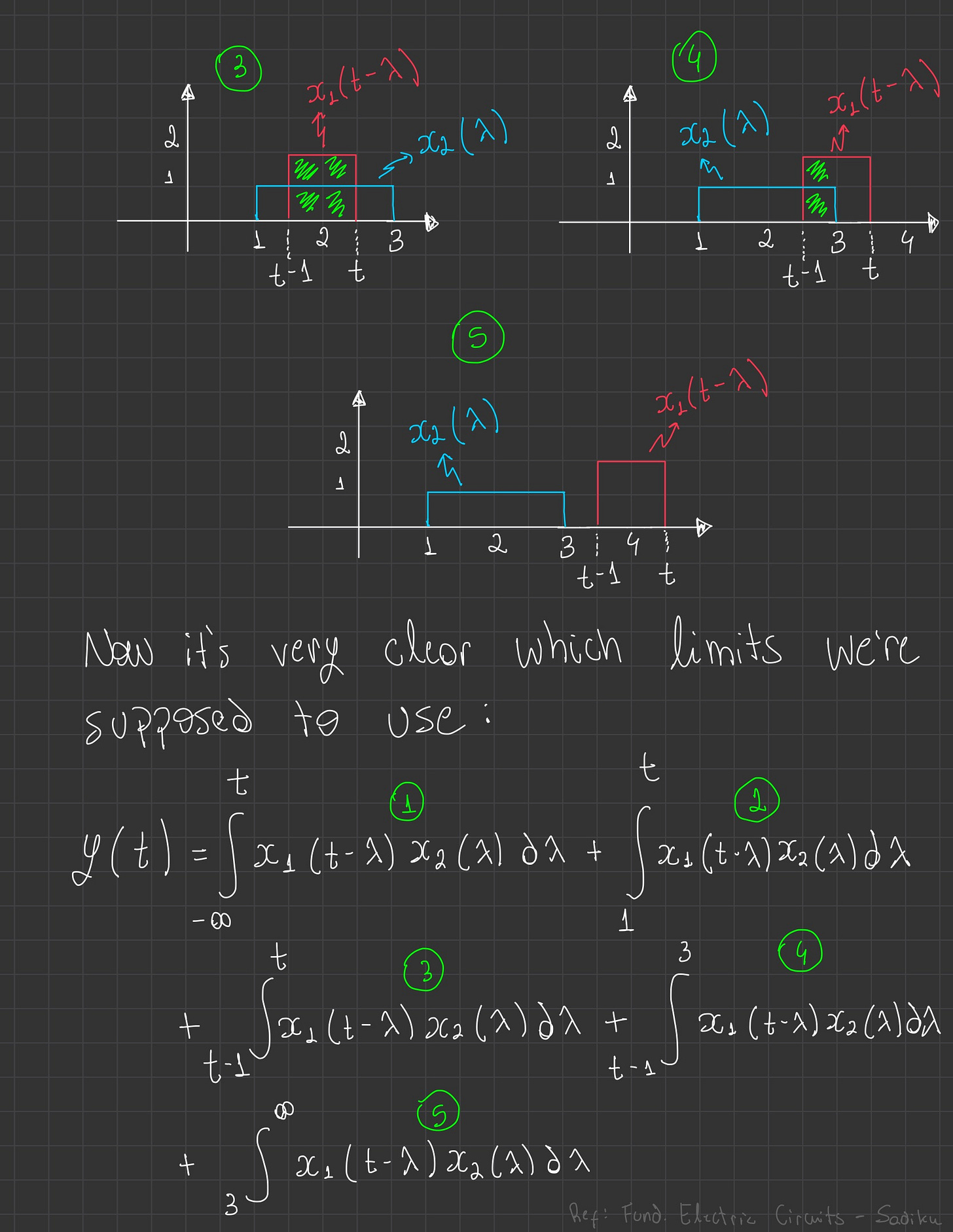

Steps to Evaluate the Convolution Integral

Folding → First, we take the mirror image of one of the signals about its origin.

Displacement → Next, we shift (delay) this folded signal by an amount t relative to the other, original signal.

Multiplication → Then, for each displacement t, we multiply the folded, shifted signal point by point with the original signal.

Integration → For that given t, we compute the area under this product. This gives a single output value, y(t). Repeating the entire process for all values of t constructs the complete output signal.

The accompanying images give a step-by-step example of how this process works.
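The four steps can also be traced numerically. Below is a minimal sketch, assuming NumPy, that approximates the convolution integral with a Riemann sum over a fine grid of λ values. The function `convolve_integral`, the unit pulse `rect`, and the grid spacing are illustrative choices, not part of the original post. Convolving a unit-width rectangular pulse with itself should produce a triangle that rises from 0 at t = 0 to 1 at t = 1 and falls back to 0 at t = 2.

```python
import numpy as np

def convolve_integral(x, h, t, lam):
    """Approximate y(t) = ∫ x(λ) h(t − λ) dλ with a Riemann sum over the uniform grid lam."""
    dlam = lam[1] - lam[0]
    y = np.empty(len(t), dtype=float)
    for i, ti in enumerate(t):
        folded_shifted = h(ti - lam)        # Folding + Displacement: h(t − λ)
        product = x(lam) * folded_shifted   # Multiplication, point by point
        y[i] = product.sum() * dlam         # Integration: area under the product
    return y

def rect(t):
    """Unit pulse: 1 on [0, 1), 0 elsewhere."""
    return ((t >= 0) & (t < 1)).astype(float)

lam = np.linspace(-1, 3, 4001)  # grid must cover the support of x; spacing 0.001
t = np.array([0.0, 0.5, 1.0, 1.5, 2.0])
y = convolve_integral(rect, rect, t, lam)
print(np.round(y, 2))
```

The printed values should be close to 0, 0.5, 1, 0.5, 0, with errors on the order of the grid spacing.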

CNN Terminology and The Discrete Case

Since our ultimate interest is understanding CNNs, it's important to highlight some key terms one might encounter. Consider the equation below:

$$S(t) = (x * w)(t) = \int_{-\infty}^{\infty} x(\lambda)\, w(t - \lambda)\, d\lambda$$

Then:

S(t) → feature map

x(t) → input

w(t) → kernel

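For the discrete case we have a very similar equation, with the integral replaced by a sum:

$$S(t) = (x * w)(t) = \sum_{\lambda=-\infty}^{\infty} x(\lambda)\, w(t - \lambda)$$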

The Code Implementation

The discrete equation above now allows us to translate the convolution operation into code:
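A minimal NumPy sketch of such an implementation follows. The function name `conv1d` and its `stride` and `padding` parameters are illustrative choices, not the author's original code. It flips the kernel so that it computes true convolution as in the discrete equation, keeping only positions where the kernel fully overlaps the (zero-padded) input. Note that CNN layers in libraries such as PyTorch compute cross-correlation, which is the same operation without the flip.

```python
import numpy as np

def conv1d(x, w, stride=1, padding=0):
    """Discrete 1D convolution of input x with kernel w.

    Only positions where the flipped kernel fits entirely inside the
    zero-padded input are computed ("valid" convolution).
    """
    x = np.pad(np.asarray(x, dtype=float), padding)  # zero padding on both ends
    w = np.asarray(w, dtype=float)[::-1]             # folding: flip the kernel
    k = len(w)
    out_len = (len(x) - k) // stride + 1
    out = np.empty(out_len)
    for i in range(out_len):
        start = i * stride                           # displacement, in steps of `stride`
        out[i] = np.dot(x[start:start + k], w)       # multiply point by point, then sum
    return out

x = [1, 2, 3, 4, 5]
w = [1, 0, -1]
print(conv1d(x, w))                        # [2. 2. 2.]
print(conv1d(x, w, padding=1))             # [ 2.  2.  2.  2. -4.]
print(conv1d(x, w, stride=2, padding=1))   # [ 2.  2. -4.]
```

With `stride=1` and `padding=0` the result matches `np.convolve(x, w, mode="valid")`, which is a convenient sanity check.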

The code above introduces some unfamiliar vocabulary, so let's analyze these terms carefully:

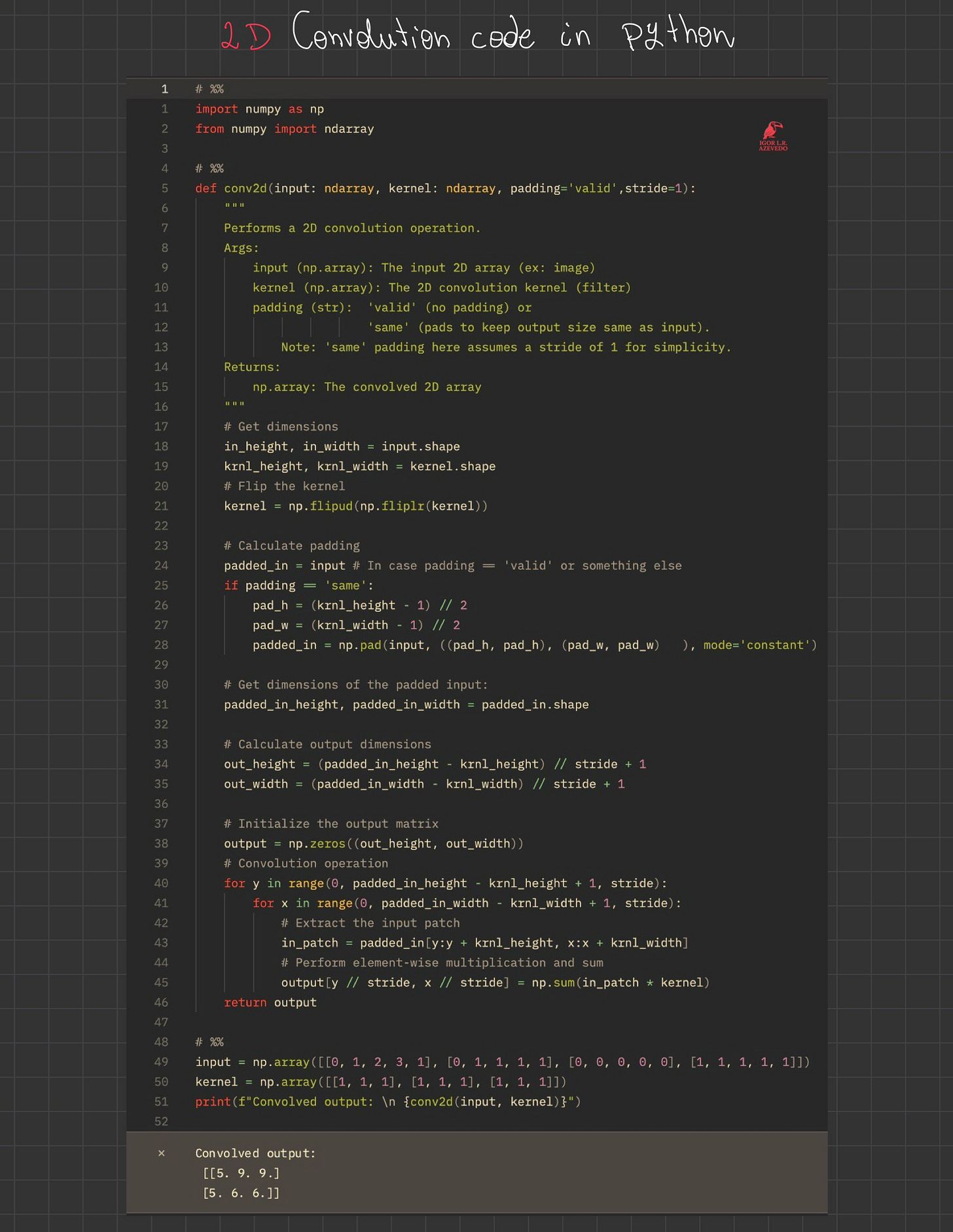

Stride → a concept from computer vision and deep learning that controls how far the filter moves over the input at each step

Without stride (stride == 1)

Compute convolution at every position

With stride (stride == S > 1)

Skip some positions

Compute convolution only at every Sth position
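The effect of the stride can be seen by listing where the kernel is placed. This tiny sketch uses a hypothetical helper, `stride_positions`, and assumes no padding.

```python
def stride_positions(input_len, kernel_len, stride=1):
    """Start indices where a kernel of length kernel_len is placed over an input
    of length input_len (no padding), moving `stride` elements per step."""
    return list(range(0, input_len - kernel_len + 1, stride))

print(stride_positions(7, 3, stride=1))  # [0, 1, 2, 3, 4]  every position
print(stride_positions(7, 3, stride=2))  # [0, 2, 4]        every 2nd position
```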

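Padding → Without padding, convolving with a kernel longer than one element produces an output smaller than the input, because the kernel can only be placed where it fits entirely inside the input.

When we want the output to preserve the input size, we pad the input's borders, typically with zeros.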

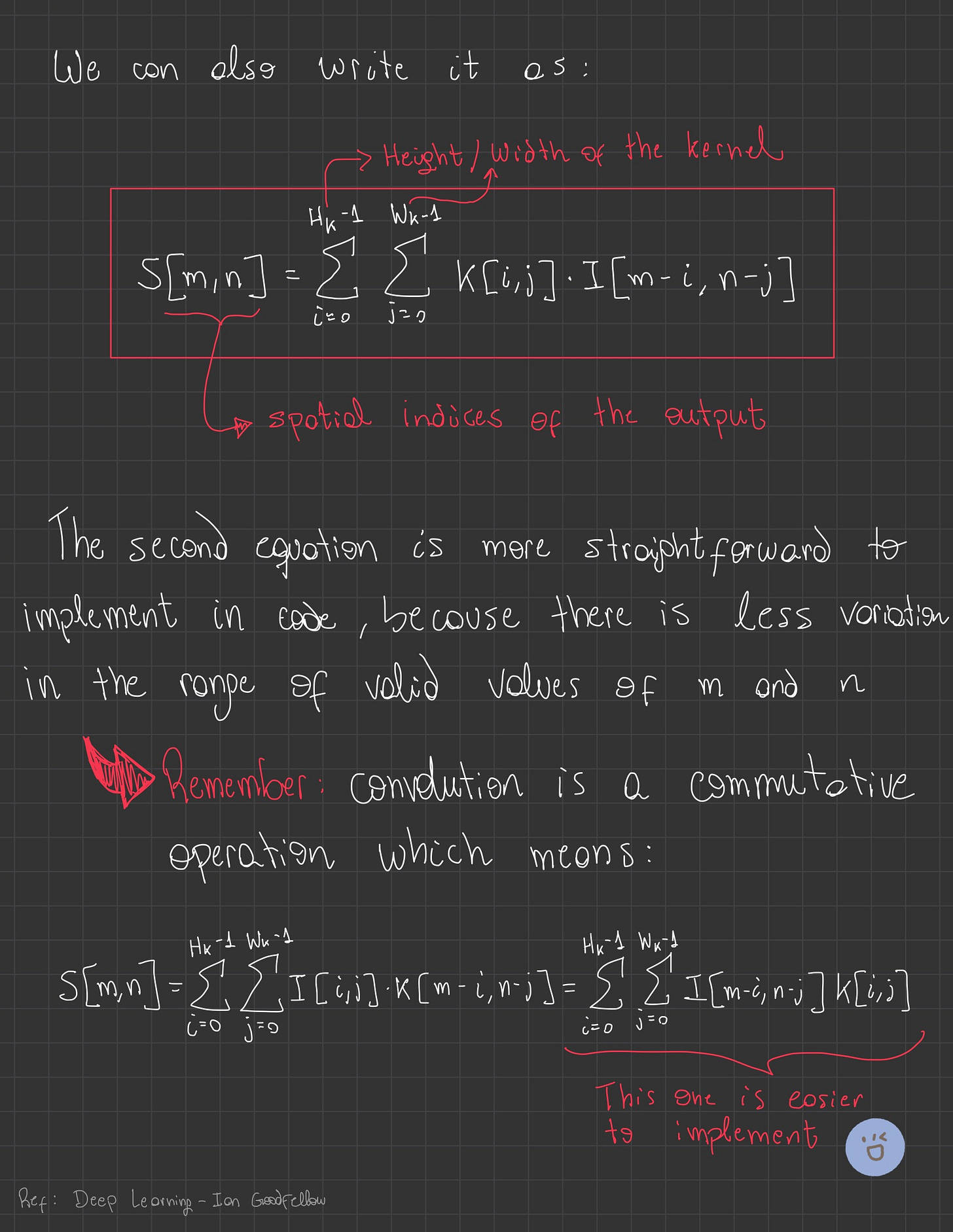
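Here is a small sketch of padding, assuming NumPy. It compares the output length with and without zero padding for an odd-length kernel and stride 1; the signal and kernel values are illustrative only.

```python
import numpy as np

x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
w = np.array([1.0, 1.0, 1.0])  # symmetric kernel, so flipping it changes nothing

# No padding: the kernel only fits at 3 positions, so the output shrinks.
valid = np.convolve(x, w, mode="valid")        # [6., 9., 12.]

# Zero padding of (K - 1) // 2 on each side keeps the output length equal to len(x)
# for an odd kernel length K and stride 1.
p = (len(w) - 1) // 2
padded = np.pad(x, p)                          # [0., 1., 2., 3., 4., 5., 0.]
same = np.convolve(padded, w, mode="valid")    # [3., 6., 9., 12., 9.]
```

The edge outputs (3 and 9) are smaller than their neighbours because part of each window now covers the added zeros.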

2D Convolution

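The 2D convolution of an input x with a kernel w is given by:

$$S(i, j) = (x * w)(i, j) = \sum_{m} \sum_{n} x(m, n)\, w(i - m, j - n)$$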

As the image below demonstrates, this equation can also be expressed with different notations, and it's important to recall that the 2D convolution operation is also commutative.

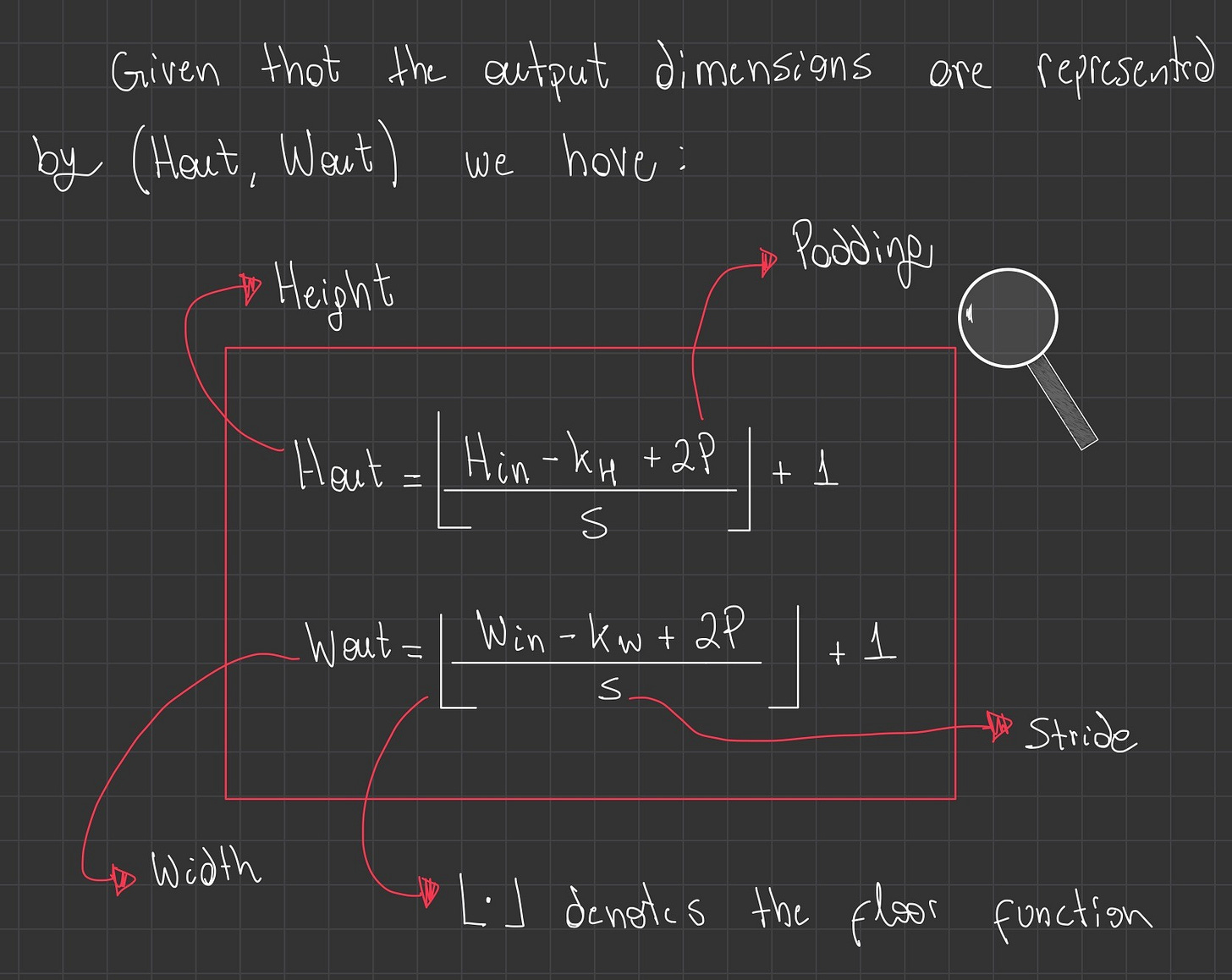

We should also highlight the method for calculating the output size of a 2D convolution.

The image presented below details how to calculate the height and width of the output matrix.

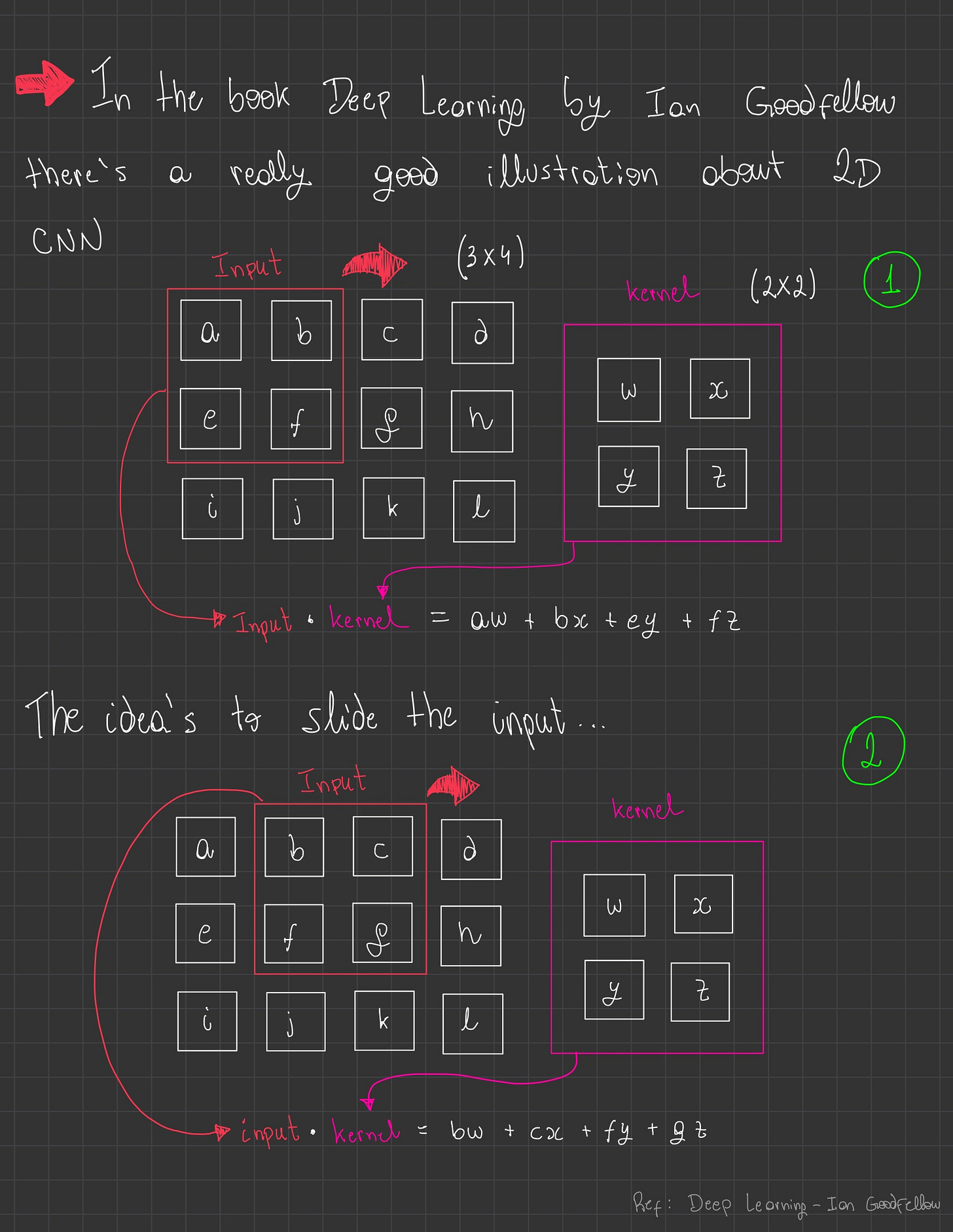

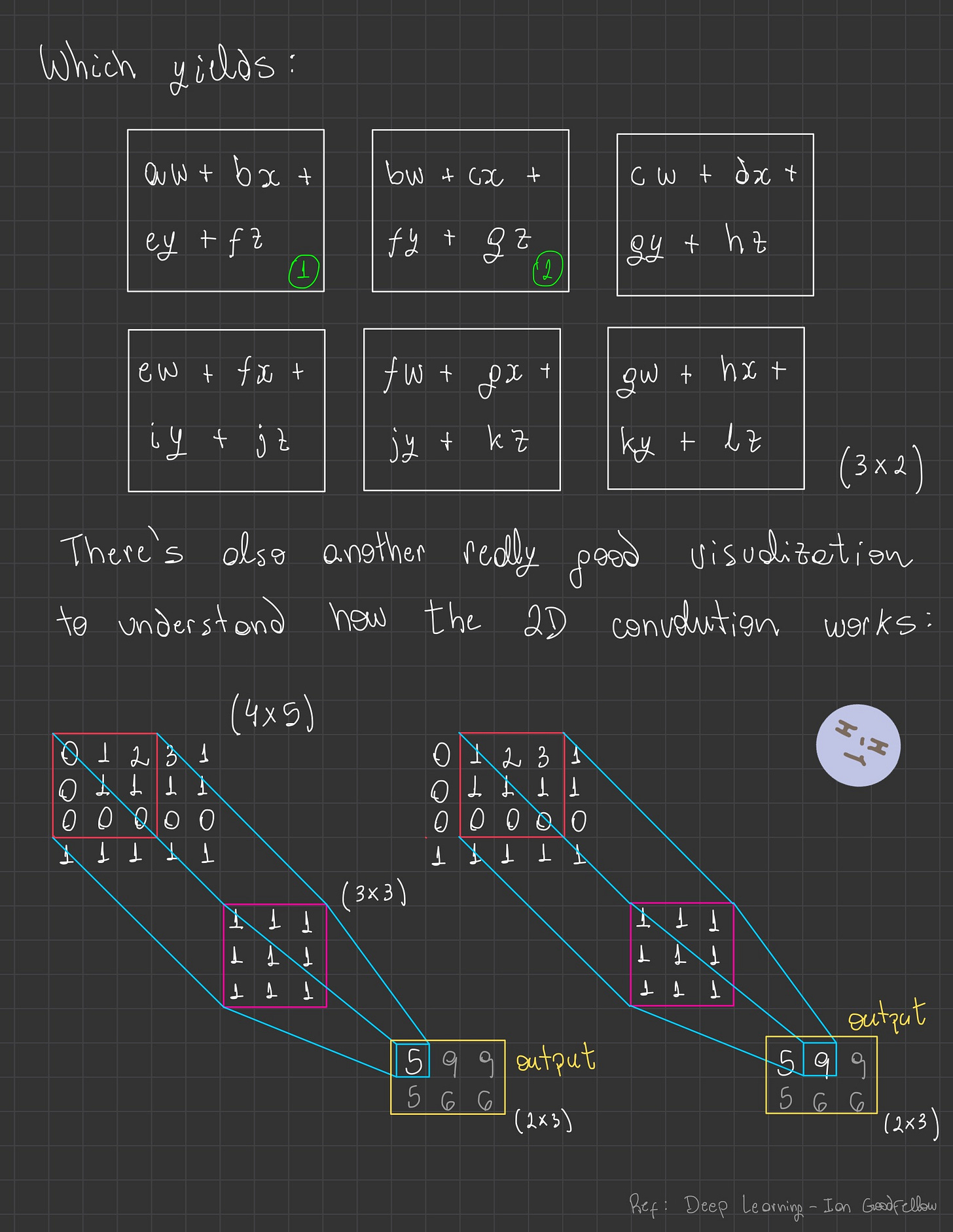
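The output-size calculation can be written as a small function. This sketch assumes the commonly used formula with symmetric zero padding and no dilation, applied independently to height and width; the function name `conv2d_output_size` is hypothetical and the notation may differ from the images.

```python
def conv2d_output_size(height, width, kernel_h, kernel_w, stride=1, padding=0):
    """out = floor((in + 2 * padding - kernel) / stride) + 1, for each dimension."""
    out_h = (height + 2 * padding - kernel_h) // stride + 1
    out_w = (width + 2 * padding - kernel_w) // stride + 1
    return out_h, out_w

print(conv2d_output_size(32, 32, 5, 5))             # (28, 28)  no padding shrinks the map
print(conv2d_output_size(32, 32, 5, 5, padding=2))  # (32, 32)  padding preserves the size
print(conv2d_output_size(7, 7, 3, 3, stride=2))     # (3, 3)    stride 2 skips positions
```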

In simple words, convolution works by sliding a small matrix called a kernel (or filter) over a larger 2D input, typically an image. At each position, it performs:

Element-wise multiplication: The kernel's values are multiplied by the corresponding overlapping pixels in the input.

Summation: All these products are summed up to produce a single output pixel value.

This process is repeated across the entire input, effectively transforming it by highlighting or modifying features based on the kernel's design (e.g., edge detection, blurring, sharpening).
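A single step of this process, one output pixel, can be computed directly. This sketch assumes NumPy and uses an illustrative 3x3 patch containing a vertical edge, a simple vertical-edge kernel, and a 3x3 box-blur kernel. It follows the element-wise description above; a strict convolution would first rotate the kernel by 180 degrees, which for this edge kernel only flips the sign of the result.

```python
import numpy as np

# A 3x3 image patch with a vertical edge: bright on the left, dark on the right.
patch = np.array([[10, 10, 0],
                  [10, 10, 0],
                  [10, 10, 0]], dtype=float)

edge_kernel = np.array([[1, 0, -1],
                        [1, 0, -1],
                        [1, 0, -1]], dtype=float)  # responds to left-right intensity changes
blur_kernel = np.full((3, 3), 1.0 / 9.0)           # box blur: average of the 9 pixels

# Element-wise multiplication followed by summation gives one output pixel.
edge_response = np.sum(patch * edge_kernel)  # 30.0: strong response to the edge
blur_response = np.sum(patch * blur_kernel)  # 60 / 9, about 6.67: the patch average
```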

Padding and stride can be used to control the output size and how the kernel moves.

Illustration

To clarify the workings of the 2D convolution operation, please refer to the images below.

The Code Implementation

Finally, let's code the 2D convolution operation in Python:
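A minimal NumPy sketch follows; `conv2d` and its `stride` and `padding` parameters are illustrative names, not the author's original code. It rotates the kernel by 180 degrees so that it matches the convolution sum, then slides it over the zero-padded input, computing only positions where it fits entirely. Removing the `np.flip` call turns it into the cross-correlation that CNN layers typically compute.

```python
import numpy as np

def conv2d(image, kernel, stride=1, padding=0):
    """2D convolution of `image` with `kernel`, keeping only positions where the
    flipped kernel fully overlaps the zero-padded input ("valid" convolution)."""
    x = np.pad(np.asarray(image, dtype=float), padding)  # zero padding on all four sides
    w = np.flip(np.asarray(kernel, dtype=float))         # rotate the kernel by 180 degrees
    kh, kw = w.shape
    out_h = (x.shape[0] - kh) // stride + 1
    out_w = (x.shape[1] - kw) // stride + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            r, c = i * stride, j * stride                # top-left corner of the window
            patch = x[r:r + kh, c:c + kw]
            out[i, j] = np.sum(patch * w)                # element-wise multiply, then sum
    return out

image = np.arange(9).reshape(3, 3)   # [[0, 1, 2], [3, 4, 5], [6, 7, 8]]
kernel = [[1, 0], [0, 0]]
print(conv2d(image, kernel))          # [[4. 5.] [7. 8.]]
```

After the flip, this kernel picks out the bottom-right element of each 2x2 window, which makes the effect of the rotation easy to see.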

Summary

Convolution in General

Convolution is a fundamental mathematical operation that combines two functions (or signals) to produce a third, modified function.

Conceptually, it describes how the shape of one function is "blended" or "filtered" by another.

This operation is crucial in diverse fields like signal processing, image processing, and statistics, as it allows us to analyze system responses, extract features, or apply filters.

1D Convolution

In one dimension, convolution operates on sequential data, such as time series or audio signals.

It involves sliding a small "kernel" (a set of weights) along the input sequence. At each step, the kernel's values are multiplied element-wise by the overlapping segment of the input, and these products are summed to generate a single output value.

This process is repeated to create a new, transformed sequence, useful for tasks like signal smoothing or identifying patterns over time.

2D Convolution

Extending to two dimensions, 2D convolution is primarily used for spatial data like images.

Here, a 2D kernel (or filter) slides over the input image. For each position, it performs element-wise multiplication between the kernel and the corresponding image patch, then sums these products to produce a single pixel in the output image (often called a feature map).

This operation is at the heart of many image processing tasks, enabling capabilities like edge detection, blurring, sharpening, and forming the core of Convolutional Neural Networks (CNNs) for image analysis.

✨ The End

If you’ve read this far…

First of all, thank you! 🙏

I hope this post helped clarify a few things and made your Deep Learning journey a bit easier.

More deep dives are on the way, so stay curious and keep building!

“I learned that courage was not the absence of fear, but the triumph over it. The brave man is not he who does not feel afraid, but he who conquers that fear.”

― Nelson Mandela

Until next time,

Igor L.R. Azevedo